Believe it or not, you have probably participated in hundreds of A/B tests. Providing clear, actionable, and relevant data for digital marketers, A/B tests are used by companies both large and small to measure the effectiveness of everything from minor variants of web page components, to email copy, to layout redesigns.

Facebook are now said to run over a thousand A/B tests per day, while Google is said to have run dozens of split tests on details as minute as the shade of blue in their logo lettering. Even Barack Obama used A/B testing extensively when trying different CTA buttons for his website during his first Presidential campaign in 2008.

While schools may not have the resources to devote to investing in A/B testing on this scale, they can still use the technique to make major gains in their student lead generation efforts. Keep reading to find out how.

What is A/B Testing and Why is it so Effective?

The true brilliance of A/B testing lies in its simplicity. Also known as split testing, an A/B test involves creating two variants of something – an A version and a B version – and then conducting a controlled experiment in which they are both delivered to a small sample of your audience to determine which is more effective.

Digital marketing experts typically recommend that you test one variable – a Call to Action, an email subject line, etc. – at a time, rather than creating drastically different versions of your marketing material. The theory is that this will allow you to know with some degree of certainty that the element you have changed is the reason for any difference in your results. Occasionally, however, it may be appropriate to perform multivariate testing, a form of split testing which involves testing more than one variable at a time.

There are countless examples of A/B testing improving the effectiveness of digital marketing campaigns across a range of industries. One particularly illuminating case study in the education sector was conducted by American online learning provider Khan Academy in 2014. At this time, the organization had added a ‘sneak-peek’ feature to one of their courses, which provided prospective students with a short snippet of the program content before they signed up.

While some leads were eager to learn more after viewing the clip, others were confused or put off by the content. To gain more insight into the issue, Khan created a variation of the program page in which the sneak-peek feature was hidden. They then ran a one-week A/B test in which 50% of users were shown the version of the page without the feature, and the other half were shown a ‘control’ version which included the sneak-peek option.

The results were conclusive. Removing the sneak peek resulted in a 27.46% increase in conversions, while students who did not see the feature were also more likely to progress further in the course and complete it after signing up.

As a result, the institution removed the feature, confident that it was detrimental both to their recruitment efforts and to the overall student experience. Cases like this illustrate how well-thought-out, properly conducted A/B tests can produce definitive, actionable results.
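A percentage like the one quoted above is normally a relative increase: the difference between the two conversion rates, divided by the control's rate. The short Python sketch below shows that arithmetic. The function name and the visitor counts are hypothetical and chosen purely for illustration; they are not Khan Academy's figures.

```python
def relative_lift(control_conversions, control_visitors,
                  variant_conversions, variant_visitors):
    """Return the relative change in conversion rate of the variant vs. the control."""
    control_rate = control_conversions / control_visitors
    variant_rate = variant_conversions / variant_visitors
    # A result of 0.25 means the variant converts 25% better than the control.
    return (variant_rate - control_rate) / control_rate


# Hypothetical numbers: 4,000 visitors saw each version of the page.
lift = relative_lift(200, 4000, 250, 4000)
print(f"Relative lift: {lift:.1%}")
```

Note that a large relative lift can still correspond to a small absolute change, so it is worth looking at both rates before drawing conclusions.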

What Can Schools Use A/B Testing For?

In a comprehensive digital marketing strategy, A/B testing for student recruitment can be useful across a number of different channels. And because these tests are often used to measure the significance of very small variations in your content, you’ll find no shortage of things that can be tweaked in order to improve your conversion rates and other key performance metrics. Here are just a few areas where you might consider A/B testing.

Online Forms

The forms on your website and landing pages can be difficult to get right. On the one hand, schools will want to gather as much information as possible about any prospective students entering their funnel in order to facilitate more effective follow-up. On the other, asking for too much information can be off-putting for potential leads who don’t want to spend a long time filling out a detailed form, or may even consider the details you are requesting intrusive.

A/B testing can allow you to experiment with your form content, adding and removing fields to see if they make a difference in both the number of leads you attract and their quality. For instance, in the two forms below, an extra question regarding budget has been removed from the B version of the test.

Questions like these may provide valuable information about new leads when they arrive in the funnel, but schools need to be careful that they are not asking for too much information. An A/B test should give you results that provide insight into whether the change is a positive or negative one.

You can also test different ways of laying out your form fields. For instance, you might want to see if a form that provides a list of options for students to check in answer to a question performs better than one which asks for a single-line answer. You may also want to see if making certain fields mandatory increases the likelihood of prospects completing them, or if it results in a decrease in conversions from leads that might be reluctant to provide certain information.

CTAs

Calls to action are another element of web and landing pages that can be particularly important to your online student recruitment efforts. A CTA needs to be eye-catching and enticing to encourage prospective students to take the next step, while also refraining from being overly pushy or inappropriate.

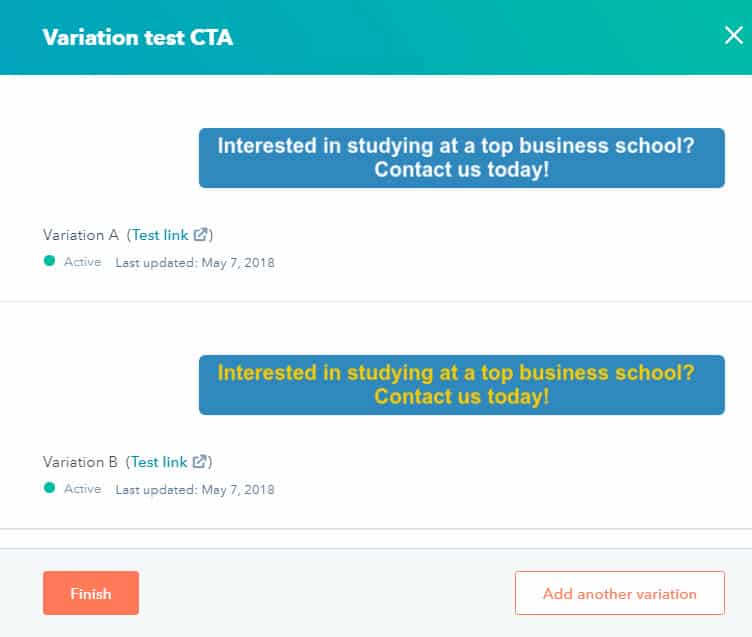

A/B testing will allow you to experiment with making changes to your CTAs in a controlled environment with minimal risk. For example, you might want to try using more irreverent or informal wording in your CTAs in order to make a stronger impression on students, or change the color or design of a CTA button to make it more visible on the page. Provided you only change a single element at a time, the results of your test will tell you with a reasonable degree of certainty what effect your changes will have.

Example: A simple A/B test created in Hubspot to measure the effectiveness of changing the colour of a CTA’s text. You might be surprised how much of a difference small changes like this could make to your lead generation results.

Testing the placement of CTAs could also prove very fruitful. For example, Hubspot have found a lot of success since adding mid-page CTAs to their blogs.

Landing Pages or Web Pages

In addition to your forms and CTAs, you may want to use A/B testing to evaluate the effectiveness of wholesale design or layout changes to your pages. You could run tests that utilize the inclusion of different images, changes in the placement of certain elements, or even alterations to your fonts, colour scheme, or logo.

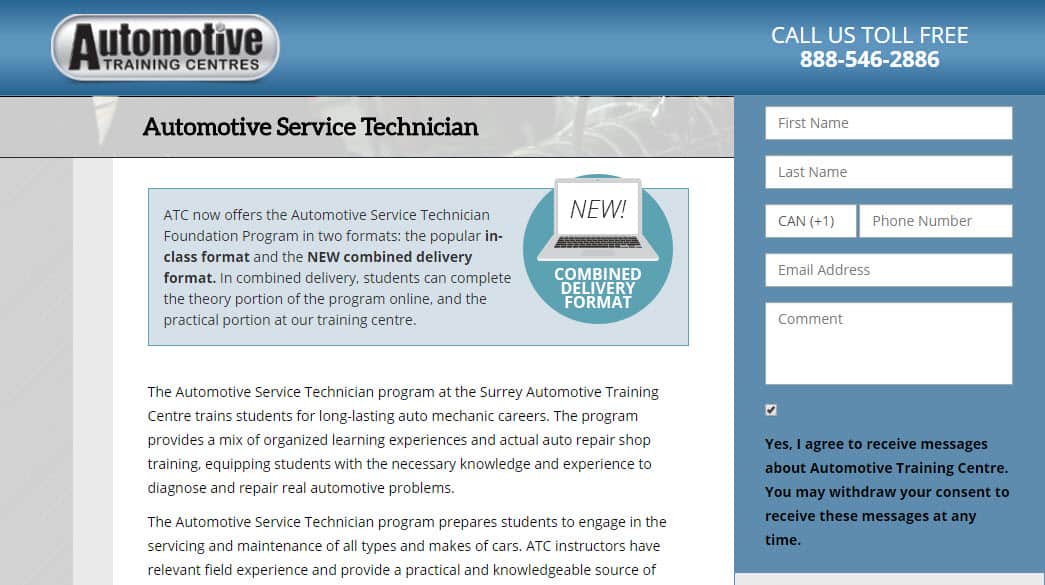

Example: Here are two alternate versions of a landing page for Automotive Training Centre’s Automotive Service Technician program. The first begins with a quick introduction to the school followed by a video:

A second version, meanwhile, focuses on highlighting the combined delivery format available to students:

Which do you think would be more effective? While both have their merits, an A/B test can give you a more definitive answer. Again, unless you are testing a complete page redesign, it is usually best to test one change at a time in your layout to get a better idea of what impact it actually has on your success rates.

You may also want to use A/B testing to evaluate the content of your pages. For instance, you may be curious as to whether a page would perform better if it provided more in-depth information about your programs or institution. Conversely, you might feel that it would be better to make your content shorter and more concise, or you may want to focus on a particular aspect or feature of a specific course more prominently, as ATC do with combined delivery in the example above. A/B testing will give you a clear picture of what your audience actually responds to, enabling you to better determine the proper approach to engaging with them.

Email Marketing

A/B testing can be used to deliver more engaging emails, too. Whether you are creating autoresponders, event invites, or weekly or monthly nurturing campaigns, you can experiment with changing any number of variables in your content, including:

- Subject lines: Trying out different subject lines could make a dramatic difference in your open rates.

- Layout: Different fonts, images, and other design elements may increase the amount of engagement you see from prospective students.

- Adding images or video: Images and video can add value to your mails, but can also increase load times and make them less likely to be read. A/B tests will allow you to determine if this is a worthwhile step.

- Links and CTAs: Including different links and CTAs might increase click-through rates on your mails, and testing which ones are most likely to entice prospective students could be a good move.

- Email copy: A/B testing can make it easier to try out new things when it comes to your email copy, and experiment with shorter or longer mails, different tones and writing styles, or different approaches to promoting your key messages.

- Send times: You can run A/B tests to determine if you generate better engagement by sending the same mail at a different time of the day or week.

Social Advertising

Because social media advertising offers a range of different targeting, placement, and creative options, it can also be ideal for A/B testing. For instance, you may want to test the performance of Facebook ads on mobile versus their performance on desktop. Likewise, you can play around with the content of your ads, trying out new copy and CTAs, or even testing the effectiveness of different images:

Targeting is a good area to test, too, particularly when considering the range of options available in certain social ad platforms. You can serve the same ads to audiences in different age groups or locations, or to groups with different interests. Not only will this help you refine your targeting, it will also allow you to confirm the accuracy of your student personas.

Example: A sample Facebook audience for an ad campaign. Which of these parameters do you think would be worth changing for an A/B test?

While A/B testing can be very useful in advertising, it’s important that these experiments do not get in the way of your continuous optimization routine. Underperforming ads should be tweaked or replaced on a regular basis, while rotating your ads to keep them fresh is also important. The amount of time necessary to produce accurate results in an A/B test could see you needlessly wasting your budget testing small variables.

Steps to Take Before Conducting A/B Tests in Online Student Recruitment Campaigns

Before you conduct any kind of A/B test for student recruitment, it’s important to take a number of steps to ensure its accuracy and effectiveness. First and foremost, you must decide what you want to test. You and your recruitment team should carefully review your marketing materials for improvements that could be made to layout, design, or copy, and brainstorm ideas. Remember, there are no bad ideas in this process, and even the smallest details have been found to lead to huge improvements in results in A/B testing, so try to keep an open mind.

It’s also important to be clear on what your goal is. For instance, you might be looking to improve conversion rates on a specific ad or landing page, or click-through rates on an email. An A/B test requires actionable metrics in order to properly measure its success, so you and your team need to ensure that there is no ambiguity about the desired outcome of the experiment.

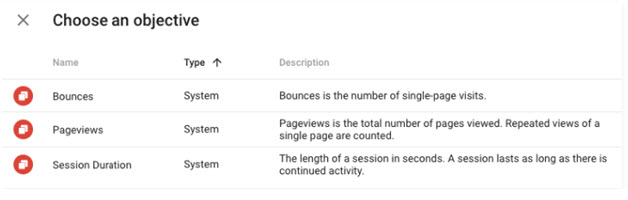

Once you have determined what you are testing, you need to find the right tool to conduct the test. There are many tools for carrying out different kinds of A/B tests, and your choice will most likely be driven by what you are testing and the goal you have in mind. For testing variations of web pages, Google Optimize may be the most suitable choice. A free testing tool which has replaced Google Content Experiments, it offers the advantage of integrating easily with Google Analytics for ease of reporting. Once you link the tool to your Analytics account, it will even automatically import your existing GA goals, as well as standard metrics like bounces, page views, and session duration:

CRM systems with built-in marketing automation functionality often also offer their own native A/B testing tools. Hubspot, for example, allows users to test different versions of forms, landing pages, and emails. These systems are often more intuitive than free options like Google Optimize, and offer some different functionalities.

Example: Hubspot’s intuitive interface allows you to easily switch back and forth between variants as you create A/B tests for landing pages and emails.

Some social media advertising tools will require you to create two separate ads and then compare the results manually to conduct an A/B test, but Facebook Ads Manager has its own built-in split testing tool.

Example: A sample of split test results for a Facebook ad.

Measuring the Effectiveness of A/B Tests

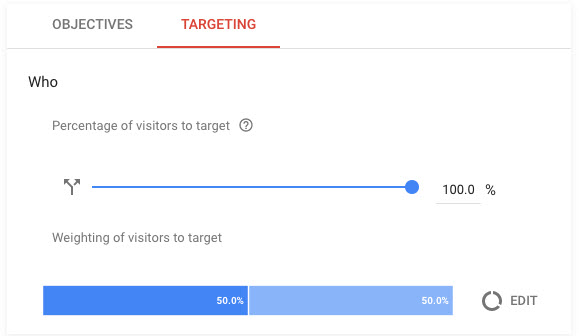

Each of these tools will ask you to set the parameters of your sample group – the portion of your audience that will see the test. If the change you are testing seems particularly risky or you are apprehensive about how it might affect your student recruitment efforts, it may be wise to select a smaller percentage of your audience for participation in the test. You can also control what percentage of this sample audience sees the ‘control’ version of the element you are testing and what percentage sees the new version, though for the majority of tests you will want to weight these equally to ensure the results are accurate.

Example: The visitor percentage and weighting options in Google Optimize. The parameters will be automatically set at 100% with a 50-50 split unless changed.
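If you are curious how these two settings might work behind the scenes, here is a minimal Python sketch. It is not how Google Optimize or any other tool is actually implemented; the function names, the `exposure` and `control_weight` parameters, and the visitor IDs are all hypothetical. It assumes each visitor has a stable identifier (such as a cookie value), and hashes that identifier so the same person always lands in the same group.

```python
import hashlib


def _bucket(key: str) -> float:
    """Map a string to a stable number in the range [0, 1)."""
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    return int(digest[:8], 16) / 0x100000000


def allocate(visitor_id: str, exposure: float = 1.0, control_weight: float = 0.5):
    """Decide what a visitor sees.

    exposure:       share of the audience included in the test (1.0 = everyone).
    control_weight: share of included visitors who see the control version.
    Returns None for visitors left out of the test, else "control" or "variant".
    """
    # First decision: is this visitor part of the sample group at all?
    if _bucket(f"in-test:{visitor_id}") >= exposure:
        return None
    # Second decision: which version does an included visitor see?
    if _bucket(f"variant:{visitor_id}") < control_weight:
        return "control"
    return "variant"


# Hypothetical cautious setup: only 20% of visitors take part, split 50-50.
for visitor in ["visitor-1", "visitor-2", "visitor-3"]:
    print(visitor, allocate(visitor, exposure=0.2))
```

Using two separate hashes keeps the "who is in the test" decision independent from the "which version" decision, so lowering the exposure does not skew the split between control and variant.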

Lastly, you will need to decide how long to run your test for, and the level of statistical significance you need to achieve. An A/B test that only runs for a short period of time or is only served to a small number of users could produce unreliable results that may not be indicative of future performance. Fortunately, the reporting offered by the tools you use should provide you with data to help you judge the significance of what you are seeing.
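To make the idea of statistical significance more concrete, the sketch below runs a two-proportion z-test, one standard way of checking whether a difference in conversion rates is likely to be more than chance. It uses only the Python standard library. The function name and the visitor counts are hypothetical, and the testing tools mentioned in this article may use different statistical methods in their own reports.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare two conversion rates; return (z statistic, two-sided p-value)."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical test with 4,000 visitors per version: 5% vs. 6.25% conversion.
z, p = two_proportion_z_test(200, 4000, 250, 4000)
print(f"Large sample: z = {z:.2f}, p = {p:.3f}")

# Same conversion rates, but only 80 visitors per version.
z_small, p_small = two_proportion_z_test(4, 80, 5, 80)
print(f"Small sample: z = {z_small:.2f}, p = {p_small:.3f}")
```

With these made-up numbers, the large sample gives a p-value below the commonly used 0.05 threshold, while the small sample does not, even though the conversion rates are identical. This is why a test served to only a handful of users should be treated with caution.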

Google Optimize, for instance, will give you an indication of just how sure you can be of a test’s viability. Below, you can see that in addition to basic performance data, the tool’s Summary Card page also provides a ‘probability to be best’ and a ‘probability to beat baseline’ score. The first metric is the level of certainty you can have that the winning variant will consistently outperform the other, while the second gives you an indication of the likelihood that your new variant will provide more conversions than the original.

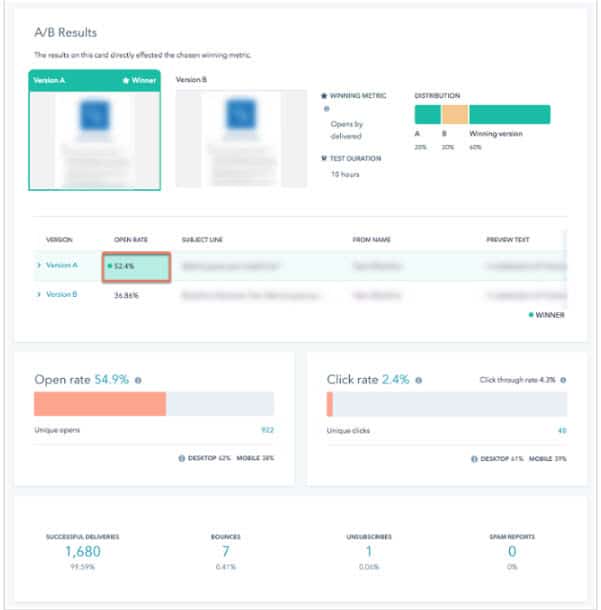
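For a rough feel of what a ‘probability to beat baseline’ style score represents, the Python sketch below estimates it by simulation. This is a simplified illustration and should not be read as Google Optimize's actual model: it assumes a uniform Beta(1, 1) prior on each conversion rate, and the function name and visitor counts are hypothetical.

```python
import random


def probability_to_beat_baseline(conv_a, n_a, conv_b, n_b, draws=20000, seed=0):
    """Estimate the chance that variant B's true conversion rate exceeds A's."""
    rng = random.Random(seed)  # fixed seed so the estimate is repeatable
    wins = 0
    for _ in range(draws):
        # Draw a plausible conversion rate for each version given its data.
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


# Hypothetical results: the original converts 200 of 4,000, the variant 250 of 4,000.
print(probability_to_beat_baseline(200, 4000, 250, 4000))
```

The closer the result is to 1, the more confident you can be that the new variant is the better performer. A result near 0.5 suggests the data cannot yet separate the two versions.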

Marketing automation platforms also provide comprehensive reports to help you measure the success of your tests. The below example from Hubspot shows the results of a 10-hour test to measure the open rates of two versions of an email:

While an A/B test can be fairly exact in terms of telling you which variant performs better in achieving your main objective, it can be worth drilling down further into the supplemental data available to see if it changes your perception of the results. For example, the above Hubspot test also provides data on Click Through Rates among other useful metrics. It is possible that the CTR is higher for the losing variant, meaning that although the winning mail is more likely to be opened, the recipients who open it are actually less likely to click through to your site, which could point to them being less interested in your institution.
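The scenario described above can be checked with a few lines of Python. The sketch below compares two email variants on more than one metric. The class, the metric definitions (clicks divided by delivered emails, and clicks divided by opens), and all of the numbers are hypothetical; your email platform may define its click metrics differently.

```python
from dataclasses import dataclass


@dataclass
class EmailVariant:
    name: str
    delivered: int
    opens: int
    clicks: int

    @property
    def open_rate(self) -> float:
        return self.opens / self.delivered

    @property
    def click_rate(self) -> float:
        # Clicks as a share of all delivered emails.
        return self.clicks / self.delivered

    @property
    def click_to_open_rate(self) -> float:
        # Clicks as a share of the emails that were actually opened.
        return self.clicks / self.opens if self.opens else 0.0


def winner_by(variants, metric: str) -> EmailVariant:
    """Return the variant with the highest value for the named metric."""
    return max(variants, key=lambda v: getattr(v, metric))


# Hypothetical results for two subject lines sent to 1,000 recipients each.
variant_a = EmailVariant("A", delivered=1000, opens=300, clicks=45)
variant_b = EmailVariant("B", delivered=1000, opens=250, clicks=50)

for metric in ("open_rate", "click_rate", "click_to_open_rate"):
    best = winner_by([variant_a, variant_b], metric)
    print(f"{metric}: variant {best.name} leads ({getattr(best, metric):.1%})")
```

In this made-up example, variant A wins on opens but variant B wins on both click metrics, which is exactly the kind of detail that a single headline result can hide.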

Similarly, you can utilize Google Analytics data to get a clearer picture of the accuracy of your information, and Google Optimize will even let you set up to 10 secondary objectives to measure in your tests. This will allow you to measure both completions of specific goals – such as a conversion – and anything else you think might affect that goal, such as the channel a prospective student is coming from, or the pages they visit before they hit the page you are testing.

It is also helpful to do some qualitative analysis of the leads you are generating from any given A/B test, in order to judge whether the changes you implement are making a real difference in attracting qualified, interested applicants, rather than merely increasing your KPIs. If you can verify this, you can be assured that your school’s A/B tests are having a tangible, positive impact on your student recruitment initiatives.