If you have ever reviewed a marketing performance dashboard and wondered what it actually means for enrollment, you are not alone. In higher education, the issue is rarely the dashboard itself. The challenge lies in what institutions choose to measure and how clearly those metrics connect to the admissions journey.

Many leading universities have already invested in sophisticated dashboards. The University of Toronto publishes interactive “Facts & Figures” dashboards across institutional performance areas, including student data. The University of Cambridge maintains a public-facing Facts & Figures dashboard, while Oxford publishes detailed public facts and figures pages. Institutions such as Cornell also use Tableau-based “Factbook” dashboards to monitor enrollment and admissions activity. These examples show that dashboards are now standard practice.

What remains less common is a marketing dashboard that answers a more operational question: are we on track to enroll the right students, into the right programs, in an efficient way?


At Higher Education Marketing, the focus has consistently been on measuring performance across the full enrollment funnel rather than isolating marketing activity. The core principle is straightforward. When institutions understand how specific tactics influence movement from inquiry to enrollment, they can improve outcomes at each stage.

This blog post provides a practical framework for building dashboards that reflect enrollment reality, not just marketing activity.

Source: University of Toronto

Source: University of Cambridge

Source: Cornell University

Why Enrollment-Focused Dashboards Fail in Practice

What is a marketing dashboard? A marketing dashboard is a visual, decision-oriented view of key performance indicators that helps your team monitor performance at a glance and take action. In BI tools like Power BI, dashboards are typically single-page canvases, distinct from multi-page reports used for deeper exploration. For higher education, the marketing dashboard becomes truly useful when it includes enrollment-linked outcomes (applications, yield, melt), not only top-of-funnel activity.

Most marketing dashboards fail to reflect enrollment reality because they are built around activity, not outcomes. In practice, they tend to fall into three recurring traps.

The first is a trap of vanity. Metrics such as impressions, traffic, and clicks are easy to track and often look positive, but they do not demonstrate meaningful progression through the admissions funnel. A campaign may generate high engagement while producing little impact on applications, deposits, or registrations. Without clear links to downstream outcomes, these metrics create visibility but not insight.

The second is a trap of fragmentation. Data often sits in disconnected systems, with web analytics in one platform, CRM data in another, and admissions outcomes tracked separately. Without shared identifiers or consistent data structures, it becomes difficult to understand how a prospect moves from initial interaction to enrollment. This fragmentation prevents institutions from seeing the full picture and limits their ability to optimize performance across stages.

The third is a trap of misalignment. Marketing teams often optimize toward platform-driven metrics such as clicks or cost per lead, while enrollment teams focus on lead quality, conversion rates, and yield. When these priorities are not aligned, performance improvements in one area may not translate into better enrollment outcomes.

Universities that take dashboards seriously often pair self-service reporting with data governance and shared definitions. The University at Buffalo operates its “Factbook” as an official reporting compendium and explicitly ties its metric definitions to the institution’s data governance process. Anchoring dashboards to governed definitions and “official” source-data status is designed to prevent conflicting definitions across teams.

Source: The University at Buffalo

You can also see the maturity of the dashboard mindset in how institutions describe the purpose of their dashboards. UM–Dearborn notes that its self-service enrollment dashboards provide shared data to support strategic conversations and decision-making. The university frames these dashboards explicitly as a “shared source of truth” and makes them easier to interpret by embedding a data dictionary in each one. Its split between public and internal dashboards suggests a governance approach that balances transparency with deeper internal drilldowns.

Source: UM–Dearborn

A “marketing metrics dashboard” becomes an enrollment dashboard when the metrics and the data model reflect the student journey, from discovery to start date, and when the institution operationalises shared definitions.

The Ten Metrics That Map to Enrollment Reality

Before building dashboards, institutions need metric discipline. The effectiveness of any dashboard depends on whether it reflects how prospects actually move through the enrollment journey. The following ten metrics are designed to align marketing performance with real enrollment outcomes across K-12, language schools, colleges, and universities, with particular relevance for higher education contexts.

Many institutions already track admissions funnel stages in isolation. UBC, for example, describes its admissions dashboards as providing “functional data to assist in the management of the UBC admissions funnel.” The university uses a fixed “as-of” census-style date to stabilize enrollment counts and make time-based comparisons coherent (a key “dashboard governance” method). Access restrictions (VPN/on-campus) indicate these dashboards are treated as internal operational analytics rather than purely public reporting.

Source: University of British Columbia

In the same vein, McGill publishes an enrollment dashboard with multi-criteria filtering and multiple tabs (overview, international by country, department/major), enabling stakeholders to segment enrollment realities without building custom reports. The explicit list of available filter functions serves as lightweight documentation for the dashboard’s analytical “degrees of freedom.”

Source: McGill University

The Metrics Table

| Metric | Why it matters for enrollment | How to calculate it (plain English) | Typical systems |
| --- | --- | --- | --- |
| Qualified inquiries by program & source | Inquiries drive the funnel, but only if they align with program, intake, and audience needs | Count unique prospects who meet defined quality criteria, segmented by source | CRM, forms, call tracking |
| Inquiry-to-application conversion rate | Indicates whether leads are viable and whether follow-up is effective | Applications submitted divided by qualified inquiries for a given cohort or intake | CRM, admissions system |
| Application completion rate | Highlights friction and drop-off during application, often a hidden issue | Applications submitted divided by applications started | Application portal, analytics |
| Offer-to-enroll yield rate | Directly impacts enrollment targets and revenue planning | Students who enroll divided by students admitted | Admissions, student system |
| Deposit-to-start melt rate | Identifies loss after commitment, where targets can quietly erode | Deposited students who do not enroll at the start divided by all deposited students | Admissions, student system |
| Speed-to-lead and speed-to-contact | Faster response times often improve conversion and prospect experience | Median time from inquiry to first response, plus percentage reached within SLA | CRM, call and SMS logs |
| Cost per enrolled student by channel | Aligns marketing with financial outcomes and prevents overvaluing low-quality leads | Total spend divided by enrolled students attributed to each channel | Ads platforms, finance, CRM |
| Content-assisted funnel progression | Shows which content contributes to movement toward inquiry or application | Percentage of conversions where content interaction occurred before action | GA4, CRM |
| Channel contribution under multiple attribution views | Different attribution models influence decision-making and budget allocation | Compare channel performance across attribution models and define a standard | GA4, CRM |
| Local intent actions tied to campus visits/inquiries | Critical for location-based recruitment and in-person programs | Track calls, directions, and clicks from local listings against inquiries or visits | Local listings, CRM |

What differentiates these metrics from generic marketing dashboards is their direct connection to enrollment outcomes. Each metric either represents a measurable stage in the enrollment funnel or sits immediately adjacent to it, making it actionable for both marketing and admissions teams.

The next step is not just tracking these metrics, but operationalising them to support better decisions. Below, we explain how each metric should be interpreted and where institutions commonly misread it.

Metric Definitions and What to Watch

What metrics should a higher education marketing dashboard include? At a minimum, include metrics that map to the funnel stages your institution actually manages: qualified inquiries, inquiry-to-application conversion, application completion, yield, melt, cost per enrollment, follow-up speed, and content-assisted conversion. These align with the idea that schools should measure KPIs at each stage of the enrollment funnel to understand contribution to conversion outcomes.

Defining the right metrics is only the first step. To make a dashboard truly useful, institutions need to understand how each metric behaves in practice, where it can mislead, and what signals to monitor over time. The following breakdown clarifies how each metric functions within the enrollment funnel and highlights what to watch to ensure it drives informed, actionable decisions rather than surface-level reporting.

Qualified inquiries by program & source

This is where many institutions need to mature quickly. A raw “lead” can represent almost anything: a brochure download, a partially completed form, an agent referral, a campus tour booking, or even a spam submission. Meanwhile, enrollment targets are highly specific. They are tied to program mix, intake periods, campuses, and revenue goals.

HEM’s CRM guidance highlights that schools commonly track metrics such as contacts created, website visits, email engagement, and lead acquisition cost. These are useful indicators of activity, but they do not reflect whether a prospect is actually viable. The enrollment-aligned shift is to define and report on qualified inquiries, using criteria such as program intent, geography, academic level, and intake timing. Without this discipline, dashboards overstate performance and make it difficult to identify risk at the top of the funnel.
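To make the idea of a qualified inquiry concrete, here is a minimal sketch of a qualification rule and a count by program and source. The criteria sets, the `Inquiry` fields, and the sample records are all hypothetical; a real rule would come from the institution's own definitions and CRM schema.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical qualification criteria; each institution would define its own.
OFFERED_PROGRAMS = {"MBA", "BSc Nursing"}
RECRUITING_COUNTRIES = {"CA", "IN", "BR"}
OPEN_INTAKES = {"2025-09", "2026-01"}


@dataclass(frozen=True)
class Inquiry:
    prospect_id: str
    program: str
    source: str
    country: str
    intake: str


def is_qualified(inquiry: Inquiry) -> bool:
    """An inquiry qualifies only if program, geography, and intake all match."""
    return (
        inquiry.program in OFFERED_PROGRAMS
        and inquiry.country in RECRUITING_COUNTRIES
        and inquiry.intake in OPEN_INTAKES
    )


def qualified_by_program_and_source(inquiries):
    """Count unique qualified prospects per (program, source) pair."""
    seen = set()
    counts = Counter()
    for inquiry in inquiries:
        key = (inquiry.prospect_id, inquiry.program, inquiry.source)
        if key in seen or not is_qualified(inquiry):
            continue
        seen.add(key)
        counts[(inquiry.program, inquiry.source)] += 1
    return counts


# Made-up sample data for illustration only.
inquiries = [
    Inquiry("p1", "MBA", "paid_search", "CA", "2025-09"),
    Inquiry("p1", "MBA", "paid_search", "CA", "2025-09"),  # duplicate form fill
    Inquiry("p2", "MBA", "paid_search", "US", "2025-09"),  # outside recruiting regions
    Inquiry("p3", "BSc Nursing", "organic", "IN", "2026-01"),
]
counts = qualified_by_program_and_source(inquiries)
for (program, source), n in sorted(counts.items()):
    print(f"{program} / {source}: {n}")
```

Four raw leads become two qualified inquiries here, which is the kind of gap a dashboard built on raw lead counts would hide.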

Inquiry-to-application conversion rate

This is often the first reliable “truth-teller” metric in the funnel. If inquiry volume is strong but application rates are low, the issue is rarely isolated. It may indicate mismatched targeting, weak program positioning, ineffective nurturing, slow follow-up, or friction in the application process itself.

Because this metric sits directly between marketing and admissions, it forces cross-functional accountability. It also provides an early signal of whether campaigns are generating prospects who are both interested and eligible.
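As a rough illustration, the rate can be computed per intake cohort as applications submitted divided by qualified inquiries. The cohort names and counts below are invented, and the function name is only a placeholder.

```python
from typing import Optional


def inquiry_to_application_rate(qualified_inquiries: int, applications: int) -> Optional[float]:
    """Applications submitted divided by qualified inquiries.

    Returns None when a cohort has no qualified inquiries, so the dashboard
    can show "n/a" instead of a misleading 0%.
    """
    if qualified_inquiries <= 0:
        return None
    return applications / qualified_inquiries


# Hypothetical counts per intake: (qualified inquiries, applications submitted)
cohorts = {
    "Fall 2025": (400, 60),
    "Winter 2026": (250, 50),
    "Spring 2026": (0, 0),  # recruitment not yet open
}

rates = {
    intake: inquiry_to_application_rate(inquiries, applications)
    for intake, (inquiries, applications) in cohorts.items()
}
for intake, rate in rates.items():
    label = "n/a" if rate is None else f"{rate:.1%}"
    print(f"{intake}: {label}")
```

Keeping the cohort fixed matters: dividing this month's applications by this month's inquiries mixes prospects at different stages and can make the rate swing for reasons unrelated to performance.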

Application completion rate

Application completion rate highlights where intent breaks down. Tracking applications started versus submitted reveals friction that is often invisible in high-level reporting. Even small usability issues, unclear requirements, or technical barriers can significantly reduce completion rates.

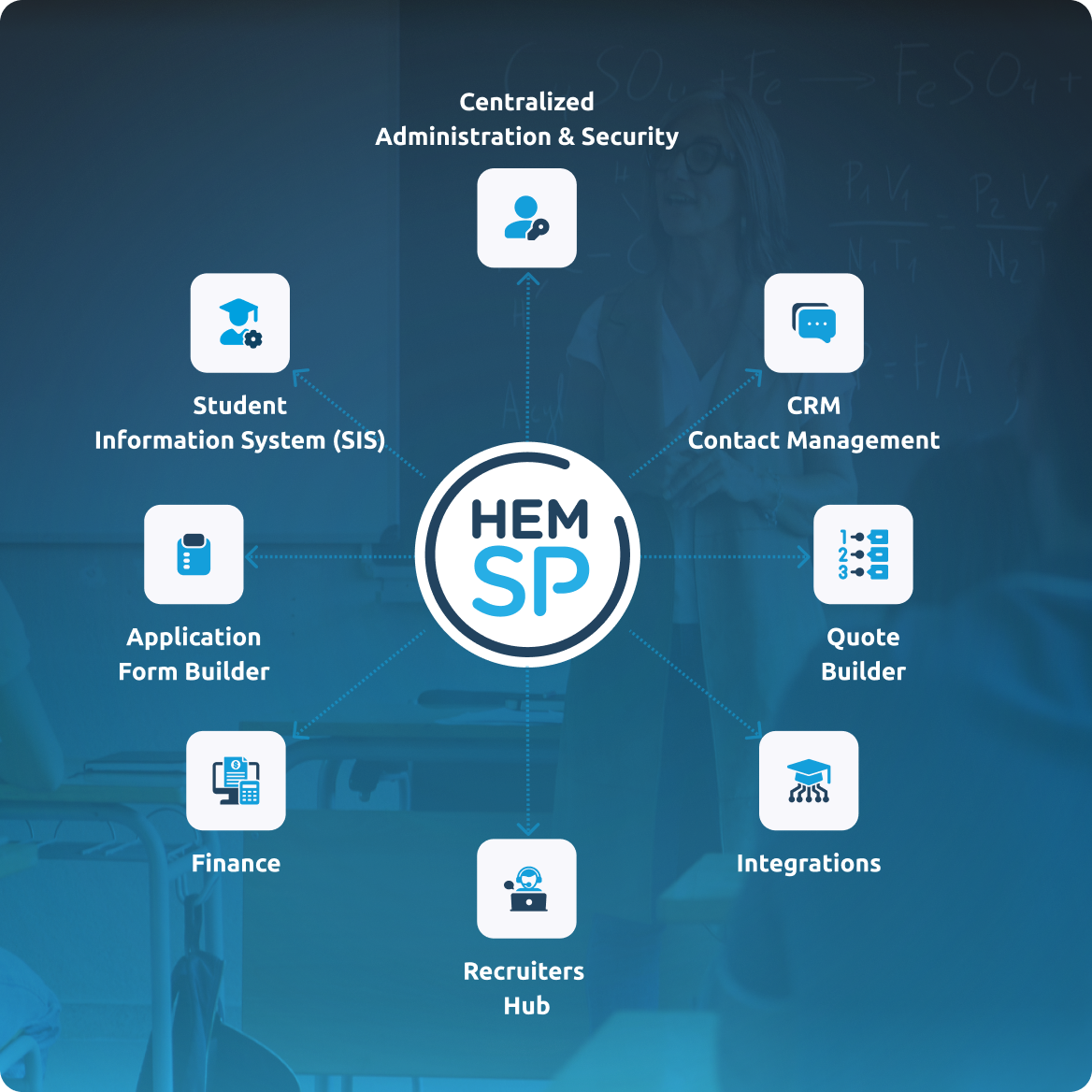

HEM’s student portal documentation notes that institutions can assess applicant volumes and paid applications through “Overview of Applications” reporting. Whether using HEM-SP or another system, this metric sits at the intersection of marketing, user experience, and admissions operations. Improvements here often produce immediate gains in conversion without increasing acquisition spend.

Source: HEM
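One way to surface that hidden friction is to track how many applicants reach each step of the form, not only the start and end. The sketch below assumes hypothetical step names and counts; the real steps depend on the application portal in use.

```python
# Hypothetical counts of applicants reaching each step of an application form,
# listed in the order applicants encounter them.
steps = [
    ("started", 500),
    ("personal details", 430),
    ("documents uploaded", 260),
    ("fee paid", 240),
    ("submitted", 235),
]


def completion_rate(steps):
    """Applications submitted divided by applications started."""
    started, submitted = steps[0][1], steps[-1][1]
    return submitted / started if started else None


def largest_drop_off(steps):
    """Return (from_step, to_step, share_lost) for the biggest step-to-step loss."""
    worst = None
    for (prev_name, prev_count), (name, count) in zip(steps, steps[1:]):
        if prev_count == 0:
            continue
        share_lost = (prev_count - count) / prev_count
        if worst is None or share_lost > worst[2]:
            worst = (prev_name, name, share_lost)
    return worst


rate = completion_rate(steps)
worst = largest_drop_off(steps)
print(f"Completion rate: {rate:.0%}")
print(f"Largest drop-off: {worst[0]} -> {worst[1]} ({worst[2]:.0%} lost)")
```

In this made-up data, the headline completion rate says little on its own, while the step view points at document upload as the place to investigate first.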

Offer-to-enroll yield rate

Yield is one of the most important downstream metrics and a key reality check for marketing effectiveness. NACAC defines yield as the percentage of admitted students who ultimately enroll and highlights its importance in managing enrollment targets.

Dashboards should allow yield to be segmented by program, student type, and acquisition channel. This is critical because not all growth is equal. A channel that produces more admits, but results in lower yield, may increase workload while reducing overall efficiency. Understanding these dynamics helps institutions prioritise sources that contribute to stable, predictable enrollment outcomes.

Deposit-to-start melt rate

Often referred to as “summer melt,” this metric captures the loss of students who have already committed but do not enroll. A BCCAT report defines summer melt as students who receive an offer and may pay a deposit but ultimately fail to enroll at the start of the term.

Even if institutions do not use the term “melt,” the operational challenge is the same. These are students who were expected to convert but did not. This represents lost revenue and planning uncertainty. Tracking melt by segment, geography, and program can help identify patterns, such as financial barriers, visa issues, or communication gaps.
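Yield and melt can be computed side by side from the same student records once each record carries admitted, deposited, and enrolled flags. This is a minimal sketch with invented records segmented by acquisition channel; it assumes the channel has already been attributed to each student.

```python
from collections import defaultdict

# Hypothetical student records: (channel, admitted, deposited, enrolled)
records = [
    ("paid_social", True, True, True),
    ("paid_social", True, True, False),   # deposited but did not start: melt
    ("paid_social", True, False, False),
    ("paid_social", True, False, False),
    ("organic", True, True, True),
    ("organic", True, True, True),
]


def yield_and_melt(records):
    """Per channel: yield = enrolled / admitted, melt = melted / deposited."""
    tallies = defaultdict(lambda: {"admitted": 0, "deposited": 0, "enrolled": 0, "melted": 0})
    for channel, admitted, deposited, enrolled in records:
        tally = tallies[channel]
        tally["admitted"] += int(admitted)
        tally["deposited"] += int(deposited)
        tally["enrolled"] += int(enrolled)
        tally["melted"] += int(deposited and not enrolled)
    result = {}
    for channel, tally in tallies.items():
        result[channel] = {
            "yield": tally["enrolled"] / tally["admitted"] if tally["admitted"] else None,
            "melt": tally["melted"] / tally["deposited"] if tally["deposited"] else None,
        }
    return result


rates = yield_and_melt(records)
for channel, r in rates.items():
    print(f"{channel}: yield {r['yield']:.0%}, melt {r['melt']:.0%}")
```

The sample data mirrors the point made above about yield: the channel with more admits is also the one with lower yield and higher melt.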

Speed-to-lead and speed-to-contact

This is one of the most underutilised metrics in higher education marketing dashboards and one of the easiest to improve. Unlike acquisition metrics, it is operational rather than budget-dependent.

If response times are slow, the impact of marketing investment is reduced. Prospects lose interest, choose competitors, or disengage entirely. HEM emphasises the importance of tracking the full journey from inquiry to enrollment within a unified system. When CRM and web data are integrated, institutions can measure time to first response, contact rates, and follow-up effectiveness by segment. Small speed improvements can have a disproportionate impact on conversion.
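The two numbers from the metrics table, median time to first response and share reached within SLA, can be sketched as follows. The one-hour SLA and the timestamps are assumptions for illustration; here, leads that were never contacted count against the SLA share but are excluded from the median.

```python
from datetime import datetime, timedelta
from statistics import median

SLA = timedelta(hours=1)  # hypothetical service-level target

# Hypothetical (inquiry time, first response time or None if never contacted)
leads = [
    (datetime(2025, 3, 3, 9, 0), datetime(2025, 3, 3, 9, 20)),
    (datetime(2025, 3, 3, 10, 0), datetime(2025, 3, 3, 12, 0)),
    (datetime(2025, 3, 3, 11, 0), datetime(2025, 3, 3, 11, 40)),
    (datetime(2025, 3, 3, 13, 0), None),
]


def speed_to_lead(leads, sla=SLA):
    """Return (median wait among contacted leads, share of all leads reached within SLA)."""
    waits = [responded - inquired for inquired, responded in leads if responded is not None]
    median_wait = median(waits) if waits else None
    share_within_sla = sum(wait <= sla for wait in waits) / len(leads) if leads else None
    return median_wait, share_within_sla


median_wait, share_within_sla = speed_to_lead(leads)
print(f"Median time to first response: {median_wait}")
print(f"Reached within SLA: {share_within_sla:.0%}")
```

The median is used rather than the mean so that a handful of very late replies does not mask an otherwise fast team, or the reverse.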

Cost per enrolled student by channel

Cost per lead is not sufficient for decision-making. Institutions need to move up the value chain, from cost per inquiry to cost per qualified inquiry, application, and ultimately enrollment.

This requires connecting marketing spend to downstream outcomes through clear attribution rules. It also requires collaboration between marketing, finance, and admissions teams. When done correctly, this metric aligns stakeholders quickly and prevents optimisation toward channels that generate volume but not results.
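A small worked example shows why cost per lead alone can mislead. The spend, lead, and enrollment figures are invented, and the channel attribution is assumed to have been done already.

```python
# Hypothetical figures per channel for one intake.
spend = {"paid_search": 12000.0, "paid_social": 8000.0}
leads = {"paid_search": 300, "paid_social": 800}
enrolled = {"paid_search": 24, "paid_social": 10}


def cost_per(spend, counts):
    """Spend divided by the outcome count per channel; None when the count is zero or missing."""
    return {
        channel: (amount / counts[channel] if counts.get(channel) else None)
        for channel, amount in spend.items()
    }


cost_per_lead = cost_per(spend, leads)
cost_per_enrolled = cost_per(spend, enrolled)
for channel in spend:
    print(
        f"{channel}: ${cost_per_lead[channel]:.0f} per lead, "
        f"${cost_per_enrolled[channel]:.0f} per enrolled student"
    )
```

In these made-up numbers, paid social looks four times cheaper per lead but is more expensive per enrolled student, so the ranking of channels flips depending on which cost metric is reported.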

Content-assisted funnel progression

A content marketing metrics dashboard should demonstrate influence, not just activity. Reporting on blog traffic or page views alone does not indicate whether content contributes to enrollment outcomes.

In GA4, key actions such as inquiry submissions, application starts, or visit bookings can be configured as “key events.” This allows institutions to analyse which content interactions occur before meaningful conversions. Over time, patterns emerge. Certain pages, guides, or videos may consistently appear in successful journeys, indicating their role in supporting decision-making.
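Once journey data has been exported, the content-assisted share can be estimated as the percentage of converting journeys in which a content page was viewed before the first key event. The sketch below does not call any GA4 API; the journey structure, path prefixes, and event names are hypothetical.

```python
# Each hypothetical journey is an ordered list of events:
# ("page", path) for a page view or ("key_event", name) for a conversion action.
journeys = [
    [("page", "/blog/nursing-careers"), ("page", "/programs/nursing"), ("key_event", "inquiry_submit")],
    [("page", "/programs/mba"), ("key_event", "inquiry_submit")],
    [("page", "/blog/study-in-canada"), ("page", "/programs/mba")],          # no conversion
    [("key_event", "application_start"), ("page", "/blog/nursing-careers")],  # content viewed after converting
]

# Assumed URL prefixes that identify content marketing pages.
CONTENT_PREFIXES = ("/blog/", "/guides/")


def content_assisted_share(journeys):
    """Share of converting journeys with a content page view before the first key event."""
    conversions = 0
    assisted = 0
    for events in journeys:
        first_key_event = next(
            (i for i, (kind, _) in enumerate(events) if kind == "key_event"), None
        )
        if first_key_event is None:
            continue  # journey never converted
        conversions += 1
        before = events[:first_key_event]
        if any(kind == "page" and value.startswith(CONTENT_PREFIXES) for kind, value in before):
            assisted += 1
    return assisted / conversions if conversions else None


share = content_assisted_share(journeys)
print(f"Content-assisted conversions: {share:.0%}")
```

Only content seen before the conversion counts, which is why the last journey is a conversion but not a content-assisted one.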

Channel contribution under multiple attribution views

Attribution is not a single metric but a set of interpretations about how credit is assigned across channels. Different models produce different conclusions, which can significantly influence budget decisions.

GA4 provides attribution reporting that allows institutions to compare how channel value shifts across models. Note that several rule-based models (first click, linear, time decay, and position-based) were deprecated as of November 2023. Dashboards should reflect currently available models and clearly document which attribution approach is used for decision-making. Without this clarity, reporting can become inconsistent or misleading.
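To see how much the choice of model changes the picture, an institution could apply a few simple rules to its own touchpoint data, for example from CRM exports. The sketch below is not GA4's attribution engine; the three rules and the sample paths are illustrative assumptions.

```python
from collections import defaultdict

# Hypothetical ordered channel touchpoints for three prospects who enrolled.
paths = [
    ["organic", "email", "paid_search"],
    ["paid_social", "paid_search"],
    ["organic"],
]


def attribute(paths, model):
    """Distribute one unit of credit per enrolled prospect according to a simple rule."""
    credit = defaultdict(float)
    for path in paths:
        if not path:
            continue
        if model == "first_touch":
            credit[path[0]] += 1.0
        elif model == "last_touch":
            credit[path[-1]] += 1.0
        elif model == "linear":
            for channel in path:
                credit[channel] += 1.0 / len(path)
        else:
            raise ValueError(f"unknown model: {model}")
    return dict(credit)


results = {model: attribute(paths, model) for model in ("first_touch", "last_touch", "linear")}
for model, credit in results.items():
    summary = ", ".join(f"{channel}={value:.2f}" for channel, value in sorted(credit.items()))
    print(f"{model}: {summary}")
```

With the same three enrollments, organic leads under first touch and paid search leads under last touch, which is why the dashboard should state which view is the standard for budget decisions.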

Local intent actions tied to campus visits/inquiries

Local visibility plays a critical role in early-stage awareness, particularly for campus-based programs. HEM highlights that platforms such as Google Business listings can act as initial touchpoints and generate meaningful engagement signals.

However, tracking should not stop at impressions or views. Institutions need to connect local actions such as calls, direction requests, and website clicks to tangible outcomes like inquiries, visit bookings, or event registrations. This ensures that local marketing activity is evaluated based on its contribution to enrollment, not just visibility.

A Marketing Metrics Dashboard Template for Higher Education

A marketing metrics dashboard becomes valuable when it is structured around decisions rather than departments. In higher education, where multiple teams contribute to enrollment outcomes, the dashboard must provide a shared view of performance that supports action, not just reporting. A layered structure works particularly well in governance-heavy environments because it allows different stakeholders to access the level of detail they need while maintaining alignment.

At the top level, an Executive Enrollment View should function as a one-screen reality check. This view brings together the metrics leadership cares about most, including targets versus forecast, inquiries, applications, offers, yield, melt, and cost per enrollment. It should also highlight emerging risks, such as underperforming programs or declining conversion rates. The goal is clarity and speed. Decision-makers should be able to assess whether enrollment goals are on track within seconds.

Beneath this, a Funnel View by program and intake provides structure. This view tracks movement from inquiry through to enrollment, showing conversion rates at each stage. It allows institutions to identify where prospects are stalling and whether issues are isolated to specific programs or cohorts.

A Campaign View connects marketing activity to outcomes. It should include spend, reach, clicks, cost per inquiry, and cost per enrolled student, broken down by channel and campaign. Any campaign that cannot be tied to at least an inquiry should be reviewed or deprioritised.

A Content View focuses on influence rather than traffic. It highlights which content contributes to key actions, such as inquiries or applications, and tracks engagement signals like program page visits, downloads, and webinar attendance.

A Local View captures geographically driven demand. It includes campus-level interest, regional lead sources, local search actions, and conversions tied to visits or events.

Finally, a CRM and Follow-up View ensures operational accountability. Metrics such as speed-to-lead, contact rates, SLA compliance, and lead ageing reveal whether prospects are being managed effectively after initial capture.

This structure is not about design. It is an accountability system that forces institutions to confront performance across the full enrollment lifecycle.

Building a Dashboard That Ties Marketing to Enrollment

To answer the practical question of how to build a dashboard that truly connects marketing to enrollment outcomes, institutions need to think in three layers: tracking, modelling, and governance. Each layer plays a distinct role, and weaknesses in any one of them can undermine the entire system.

Tracking: Define key events and enforce tracking hygiene

Effective dashboards start with reliable tracking. In GA4, important actions such as inquiry submissions, application starts, or visit bookings can be marked as key events, allowing them to be measured consistently across channels. This creates a foundation for understanding how users move from initial engagement to meaningful action.

However, simply enabling tracking is not enough. Institutions need a clear tracking QA process. This includes validating that UTM parameters are applied consistently, confirming that key events are firing correctly, ensuring consent settings are properly configured, and verifying that form submissions pass clean and usable source data into the CRM.

Google’s URL builder guidance explains that UTM parameters allow Analytics to attribute traffic to specific campaigns. In practice, this only works if naming conventions are standardised and enforced across teams. Without consistency, reporting becomes fragmented and unreliable.
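A naming convention is easier to enforce when campaign URLs are checked automatically before launch. This is a minimal sketch using Python's standard `urllib.parse`; the required parameters, the allowed mediums, and the lowercase-only rule are example conventions, not Google requirements.

```python
import re
from urllib.parse import parse_qs, urlparse

# Example conventions only; each institution would define its own.
REQUIRED_PARAMS = ("utm_source", "utm_medium", "utm_campaign")
ALLOWED_MEDIUMS = {"cpc", "email", "social", "display", "referral"}
VALUE_PATTERN = re.compile(r"[a-z0-9_-]+")  # lowercase letters, digits, underscore, hyphen


def utm_problems(url):
    """Return a list of naming-convention problems found in a campaign URL."""
    params = parse_qs(urlparse(url).query)
    problems = []
    for key in REQUIRED_PARAMS:
        values = params.get(key)
        if not values:
            problems.append(f"missing {key}")
        elif not VALUE_PATTERN.fullmatch(values[0]):
            problems.append(f"{key} breaks naming convention: {values[0]!r}")
    medium = params.get("utm_medium", [""])[0].lower()
    if medium and medium not in ALLOWED_MEDIUMS:
        problems.append(f"unexpected utm_medium: {medium!r}")
    return problems


good = "https://example.edu/programs/mba?utm_source=google&utm_medium=cpc&utm_campaign=mba_fall_2025"
bad = "https://example.edu/?utm_source=Google&utm_medium=cpc"
print(utm_problems(good))
print(utm_problems(bad))
```

A check like this could run on a spreadsheet of planned campaign links, so that "Google" and "google" never end up as two separate sources in reporting.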

Modelling: Connect identities without breaking privacy rules

Once tracking is in place, the next challenge is connecting data across systems. This is where many dashboards fail. If a prospect’s web activity cannot be linked to their CRM record, the connection between marketing and enrollment is lost.

At the same time, institutions must operate within strict privacy constraints. Google Analytics policies explicitly prohibit sending personally identifiable information, such as email addresses or phone numbers, to Analytics. Poorly configured URLs or redirects can accidentally expose this data, for example when a form passes an email address in a query string.

For Canadian institutions, privacy compliance is essential. Depending on the institution and jurisdiction, this may involve provincial public-sector privacy laws, and in some situations, PIPEDA. CASL also requires consent and clear opt-out mechanisms for commercial electronic messages.

A robust dashboard approach should therefore rely on privacy-safe identifiers, consent-aware tracking, and minimal exposure of personal data.
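One practical safeguard is to scrub page URLs before they are logged or passed to an analytics tool. The sketch below removes query parameters that look like personal data; the list of suspect keys and the email pattern are assumptions and would not catch every case, so it complements rather than replaces a proper privacy review.

```python
import re
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse

# Assumed parameter names that commonly carry personal data.
SUSPECT_KEYS = {"email", "e-mail", "phone", "tel", "name", "first_name", "last_name"}
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def strip_pii(url):
    """Return (cleaned_url, removed_keys) with likely-PII query parameters dropped."""
    parts = urlparse(url)
    kept, removed = [], []
    for key, value in parse_qsl(parts.query, keep_blank_values=True):
        if key.lower() in SUSPECT_KEYS or EMAIL_PATTERN.search(value):
            removed.append(key)
        else:
            kept.append((key, value))
    cleaned = urlunparse(parts._replace(query=urlencode(kept)))
    return cleaned, removed


url = "https://example.edu/thanks?email=jane%40example.com&utm_source=google&contact=sam%40example.org"
cleaned, removed = strip_pii(url)
print(cleaned)
print(removed)
```

The second parameter in the example is caught by its value rather than its name, which covers forms that pass an email address under an unexpected key.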

Governance: Standard definitions and reporting discipline

Even with strong tracking and modelling, dashboards can fail without governance. Definitions often vary across teams. “Enrollment” may refer to deposited students, registered students, or official census counts.

Leading institutions address this by documenting definitions and reporting rules. UC Berkeley, for example, ties enrollment data to a specific census point in the academic term. UBC similarly defines reporting cut-offs based on registered students on a fixed date.

To maintain clarity, institutions must document what enrollment means in their context, understand data lag between systems, and define a single source of truth. Without this discipline, dashboards risk becoming inconsistent and difficult to trust.
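A census-style definition can be written down precisely enough that two teams running it get the same number. As a minimal sketch, assuming a hypothetical census date and registration records with optional withdrawal dates, "enrolled" means registered on or before the as-of date and not withdrawn by it.

```python
from datetime import date

CENSUS_DATE = date(2025, 10, 15)  # hypothetical reporting cut-off

# Hypothetical records: (student_id, registered_on, withdrew_on or None)
registrations = [
    ("s1", date(2025, 8, 20), None),
    ("s2", date(2025, 9, 2), date(2025, 9, 30)),   # withdrew before census
    ("s3", date(2025, 9, 5), date(2025, 11, 1)),   # withdrew after census
    ("s4", date(2025, 10, 20), None),              # registered after census
]


def census_enrollment(registrations, as_of):
    """Count students registered on or before `as_of` who had not withdrawn by then."""
    return sum(
        1
        for _student_id, registered_on, withdrew_on in registrations
        if registered_on <= as_of and (withdrew_on is None or withdrew_on > as_of)
    )


print(census_enrollment(registrations, CENSUS_DATE))
print(census_enrollment(registrations, date(2025, 9, 15)))
```

Running the same function with different as-of dates gives different counts from identical records, which is the reason the cut-off needs to be documented alongside the metric.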

Final Thoughts

A marketing dashboard only becomes valuable when it reflects how enrollment actually happens. That requires more than adding charts or increasing data volume. It requires clarity in what is measured, consistency in how it is defined, and alignment across teams responsible for moving prospects through the funnel.

The institutions that see the most value from dashboards are not necessarily those with the most advanced tools. They are the ones that connect marketing activity to admissions outcomes, enforce shared definitions, and use data to support decisions rather than justify activity.

How do you build a dashboard that ties marketing to enrollment? Define key events and enforce clean campaign tagging so you can attribute inquiries and applications across channels. Connect CRM and admissions outcomes using privacy-safe identifiers and ensure you are not sending PII into analytics systems. Then operationalise shared definitions and reporting cadence, as institutions do when publishing enrollment or census-based reporting.

When dashboards are structured around the enrollment journey, they shift from reporting tools to operational systems. They highlight risk earlier, clarify where investment is working, and expose where prospects are stalling. The goal is straightforward: not better dashboards, but better decisions about how to reach, convert, and enroll the right students efficiently.

